For fourteen years, I had a rotten little chihuahua named Ethel, who was a malevolent scheming weasel. I didn't realize it at the time, but learning to speak "malevolent scheming weasel" was great training for understanding the press releases that AWS puts out semi-regularly.

Today, the OpenAI polycule put out a whole swath of press releases. OpenAI itself led the charge with four distinct press releases. Microsoft put out a surprisingly defensive press release that didn't say much. SoftBank found $30 billion from somewhere and announced that. NVIDIA didn't announce anything, as nobody there could reach a keyboard through all the money piling up in the office. And of course, Amazon had its own, which is what I'll unpack for you today.

Let's Set the Stage

OpenAI announced a $110 billion funding round at a $730 billion pre-money valuation, which for those keeping score at home values them at roughly the GDP of the Netherlands, only with somehow even more windmills at which to tilt. SoftBank and NVIDIA are each putting in $30 billion, while Amazon offered up $50 billion to capture the headline. (Well, $15 billion now and $35 billion later "when certain conditions are met," but we'll get to that.) Additional investors are "expected to join as the round progresses," which is fundraising-speak for "the round isn't closed yet but we wanted the press cycle today." Notably absent from the investor list: Microsoft, who instead published a statement insisting everything is fine with the energy of someone whose partner just moved in with a roommate named Amazon.

In return for a pile of cash, Amazon gets three things:

First, it becomes the "exclusive third-party cloud distribution provider" for OpenAI Frontier, OpenAI's enterprise agent platform. "Third-party" is the key qualifier: it carves out Microsoft, which isn't a third party by virtue of its existing relationship. In fact, Microsoft takes pains to mention that Frontier will be hosted in Azure, despite AWS being its exclusive third-party distributor. (Note: this does suggest that without a specific carve-out, use of Frontier will not help you retire your AWS spend commitment, but "different rules" and all that, so who really knows. Check first before you rely on this!) So… will data for a Bedrock service reside in Azure? That's a hell of a caveat if so. The contortions everyone is going through to respect the letter of the agreement between Microsoft and OpenAI, while the relationship has very clearly soured, are quite something.

Second, OpenAI will build custom models for Amazon's own consumer-facing products, which is Amazon quietly admitting that Nova needs reinforcements, presumably after listening to one of the customers who tried to use it as a frontier model. Great: something new to shove more ads in front of customers as the experience of shopping on Amazon continues to deteriorate.

Third, and this is the big deal, Amazon and OpenAI are co-building a "Stateful Runtime Environment" that runs in Bedrock — a persistent orchestration layer for agents with memory, tool access, identity management, and state that carries across sessions. And the way the companies talk about this is the hidden gem of this entire issue: we see where the contractual lines are drawn with respect to Microsoft and OpenAI.

We now see in plain English (albeit with a slight ermine accent) that Microsoft has exclusive rights to stateless API hosting, which is why nobody but Azure hosts any of OpenAI's models beyond the open-weight GPT-OSS. So to get around this, OpenAI and Amazon just invented a new product category called the "Stateful Runtime Environment" that, by definition, falls outside that exclusivity. This deal matters so much to Amazon that Andy Jassy basically trampled AWS CEO Matt Garman in his mad rush to the spotlight, as today's CNBC appearance made clear. To be fair, the orchestration problem is real, and this could be genuinely useful, but I sincerely doubt Amazon's ability to market it in terms of actual customer problems without once again coming across as belligerent and out of touch with reality.

Microsoft's joint statement hammers the word "stateless" repeatedly, which tells you exactly where the contractual boundary was drawn. Meanwhile, any stateless API calls that the Stateful Runtime makes under the hood still route through Azure — meaning Microsoft clips a coupon (and transits the data) on every transaction Amazon distributes. Amazon is paying (maybe) $50 (but definitely $15) billion to build a storefront where the back end runs on a competitor's infrastructure. Welcome, Amazon, to the experience that every one of your customers has. As Om Malik pointed out, Amazon is paying roughly 16x what Microsoft paid per percentage point of OpenAI, with none of Microsoft's exclusives. That's not just the cost of being late to AI, but rather the cost of showing up to the auction after OpenAI learned how to run one.

As always, the devil is in the details:

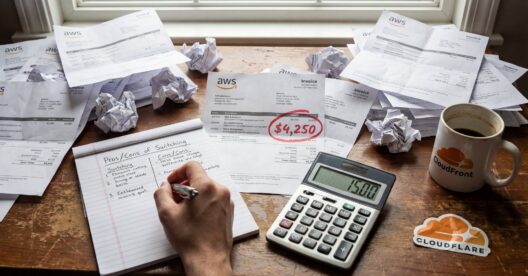

That $50 Billion Isn't Real

The fine print makes it clear that Amazon is in fact giving them $15 billion, with "another $35 billion in the coming months when certain conditions are met." Those conditions have not been disclosed, so I'm going to assume they include Sam Altman serenading both Andy Jassy and Matt Garman on the re:Invent keynote stage.

The bold print on the AWS press release says "OpenAI to consume 2 gigawatts of Trainium capacity through AWS infrastructure to support demand for Stateful Runtime Environment, Frontier, and other advanced workloads." I wonder how many terabyte pound-months that works out to. The press release also describes this as enabling enterprises to "consume intelligence on demand," which confirms that whatever brain worm infects AWS's prose stylists has consumed a fair bit of intelligence already, since the rest of us simply say "calling an API."

OpenAI has historically been all-in on NVIDIA GPUs, which makes sense: they work, have overwhelming ecosystem support, and are available from every cloud vendor. (Well, if you're OpenAI. If you're you or me, there will be "capacity constraints" most places.) Trainium is untested at scale; the only notable public reference customers have been Anthropic (to whom Amazon has likewise thrown billions of dollars) and Apple (who give public endorsements about as frequently as Siri gives a useful answer on the first try). This suggests that either taking the Trainium capacity unlocks other contractual benefits for OpenAI, or they're so desperate for GPU-alikes that they're prepared to run their workloads on anything with a power cord. My sense is that both are likely true. Today's announcements also mention that NVIDIA is providing 5GW of capacity to OpenAI, which relegates the Trainium commitment to "hobby project," more or less. Heck, they talk about "Trainium4, which is due in 2027," so the forward-looking statements disclaimer at the end, which rivals the rest of the announcement in length, goes from "covering their bases" to "absolutely critical." A chunk of this commitment is for silicon that hasn't been fabricated yet.

Remember the $38 Billion Commitment?

"OpenAI and AWS are expanding their existing $38 billion multi-year agreement by $100 billion over 8 years," quoth the press release, and $138 billion in AWS commitment over a ten-year span is… certainly something. Is that a hard commit or an "up to" number? Is it "use it or lose it," or is it subject to ongoing adjustment, with "$100 billion" chosen because it plays nicely for the cameras and Amazon was tired of being the smallest hyperscaler commitment OpenAI had announced? What happens if Trainium4 underperforms ("wait until you see Trainium5!") and OpenAI wants to shift workloads back to NVIDIA? The press release is frustratingly silent on exit clauses.

So Now What?

Does the stateful runtime service that doesn't yet technically exist count against your AWS contractual commitments? How will OpenAI's models on Bedrock be priced relative to Anthropic's, given the Azure passthrough? What does this mean for customers choosing between Azure OpenAI and Bedrock Anthropic? Is the passing mention of "AgentCore integration" a sign that AgentCore is being built upon, or quietly replaced? And how does Anthropic feel about its Bedrock co-tenancy now that Amazon has spent six times as much on the new roommate?

We're going to find out.